What is Phase?

Even if you’re relatively new to the world of recording and music production, you’ve probably heard or read phrases such as “flipping the phase,” “phase shift,” “phase shifter,” and “out of phase.” The operative word in all of these is “phase,” and it’s a critical one to understand for many different reasons. In this article, we’ll take a look at what it all means.

First Things First

Before we get into phase, it’s helpful to first talk about sound waves. Sound waves are created by the disturbance of molecules in the air, causing a fluctuation in air pressure. To borrow the old riddle: if a tree falls in the forest and nobody hears it, it still creates sound waves.

As the waves coming from the sound source (the source of that disturbance) move through the air, the molecules come together and spread apart — a phenomenon referred to as compression and rarefaction. A complete wave cycle consists of one compression and one rarefaction. The higher the pitch of the sound, the faster each cycle occurs.

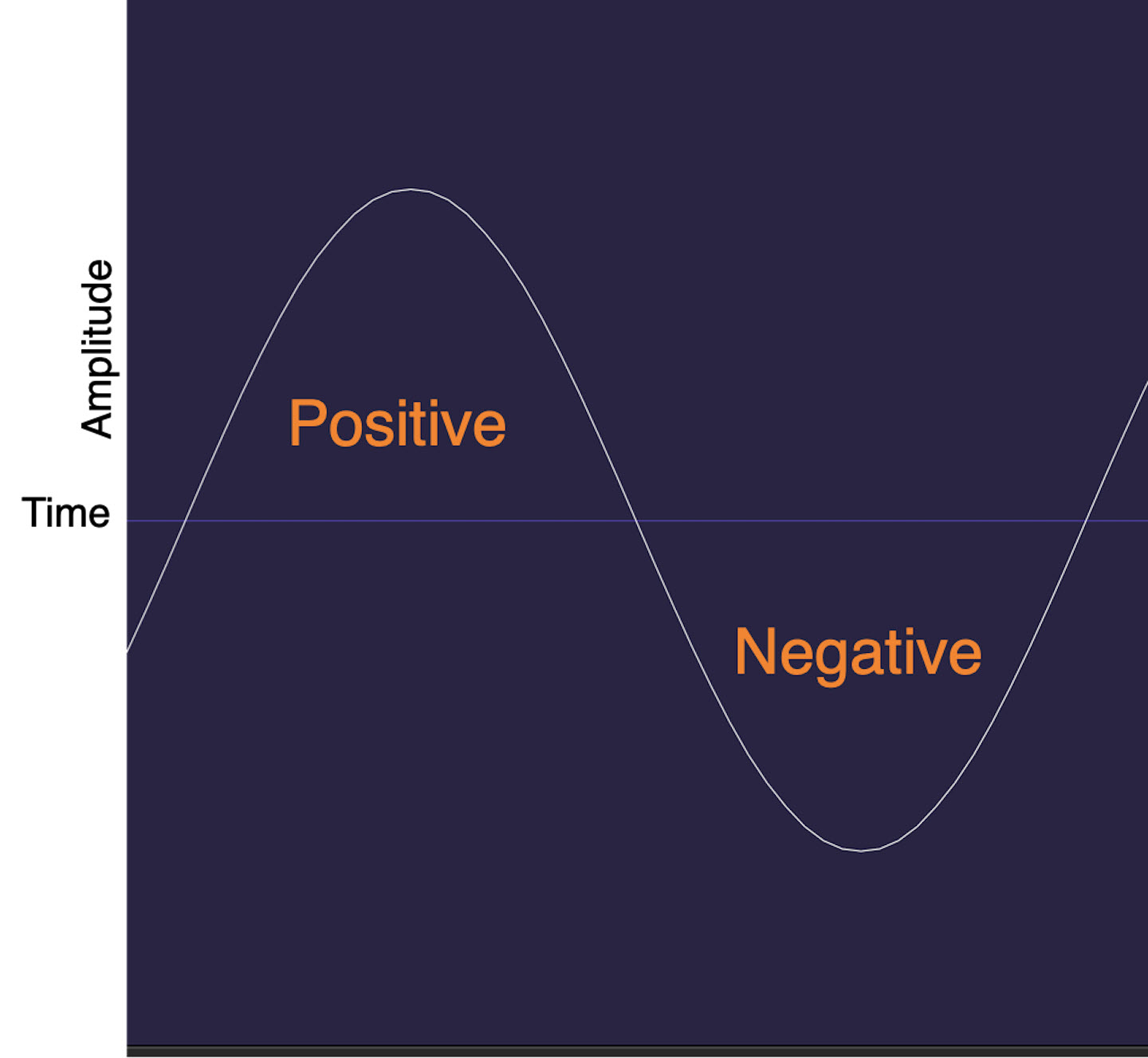

The duration of one cycle is called the period, and the distance that one cycle spans as it travels through the air is called the wavelength. Once a microphone (or other transducer, a device that changes energy from one form to another) captures a sound wave and converts it to an electrical signal, you can refer to the compression part of the cycle as the “positive” part of the signal and the rarefaction as the “negative” part.
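To put rough numbers on how pitch relates to the length of a cycle, here is a minimal sketch. It assumes sound travels at about 343 meters per second (dry air at room temperature); the function names are made up for illustration.

```python
SPEED_OF_SOUND_M_PER_S = 343.0  # assumption: dry air at roughly 20 °C


def period_seconds(frequency_hz: float) -> float:
    """Time taken by one complete cycle (one compression plus one rarefaction)."""
    return 1.0 / frequency_hz


def wavelength_meters(frequency_hz: float,
                      speed: float = SPEED_OF_SOUND_M_PER_S) -> float:
    """Distance one cycle spans while travelling through the air."""
    return speed / frequency_hz


# Three A notes, each two octaves above the last.
for frequency in (110.0, 440.0, 1760.0):
    print(f"{frequency:7.1f} Hz: "
          f"{period_seconds(frequency) * 1000:.3f} ms per cycle, "
          f"{wavelength_meters(frequency):.3f} m long")
```

The higher the frequency, the shorter both numbers get, which is why a given timing offset matters more for high-pitched content than for low.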

The illustration below shows a particular kind of waveform called a sine wave. This simple waveform is used for test tones and is handy for demonstration purposes because it’s regular and symmetrical. What you see here is one cycle of a sine wave. Time is the horizontal (X) axis, and amplitude is the vertical (Y) axis. As you can see, it rises into the positive portion for a particular period of time and returns to zero, then makes the same journey downwards into the negative portion over that same period of time before returning to zero again.

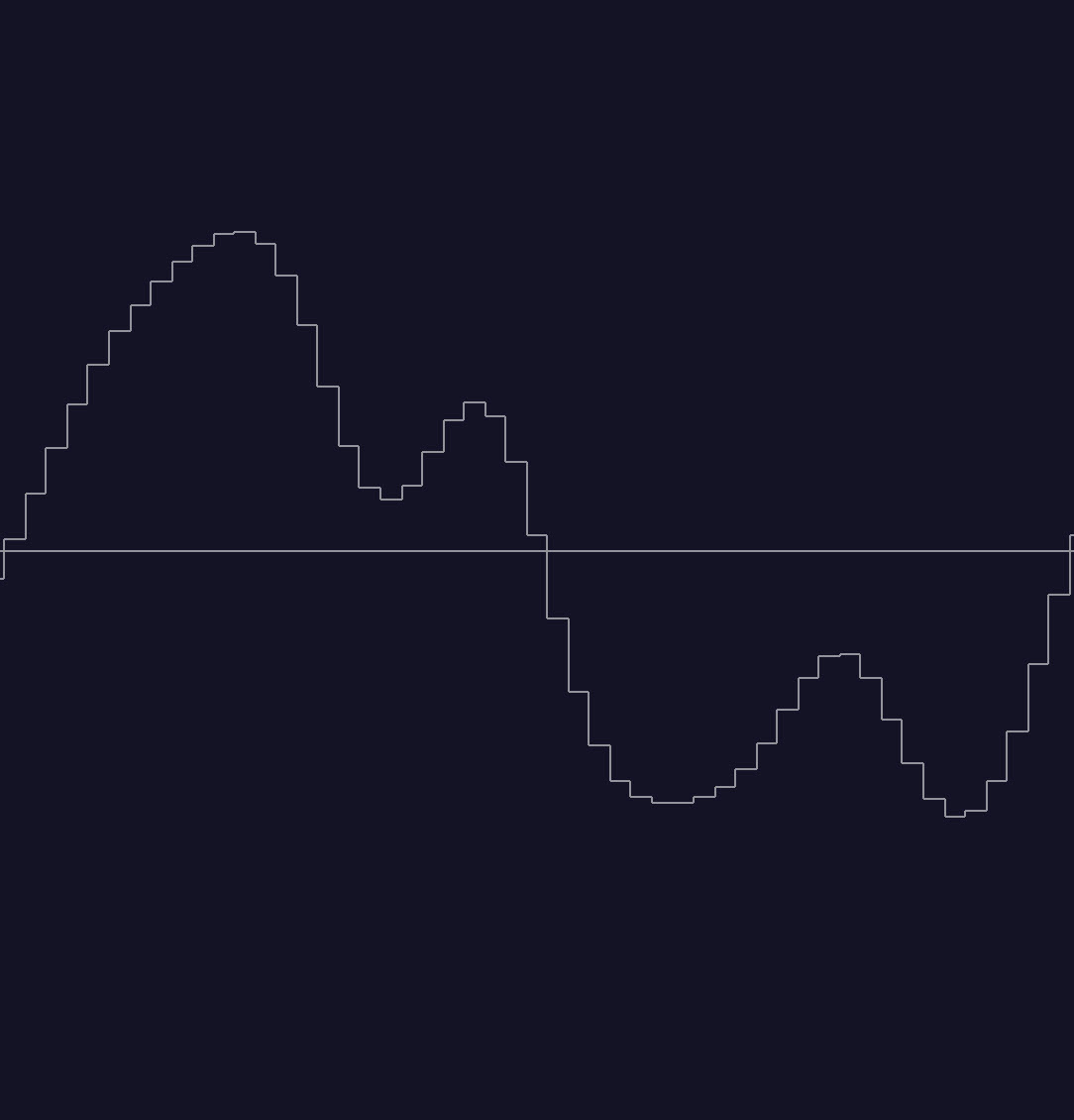

In contrast, here’s a waveform from a guitar recording. As you can see, it has a more complex shape, reflecting the fact that it’s a more complex sound.

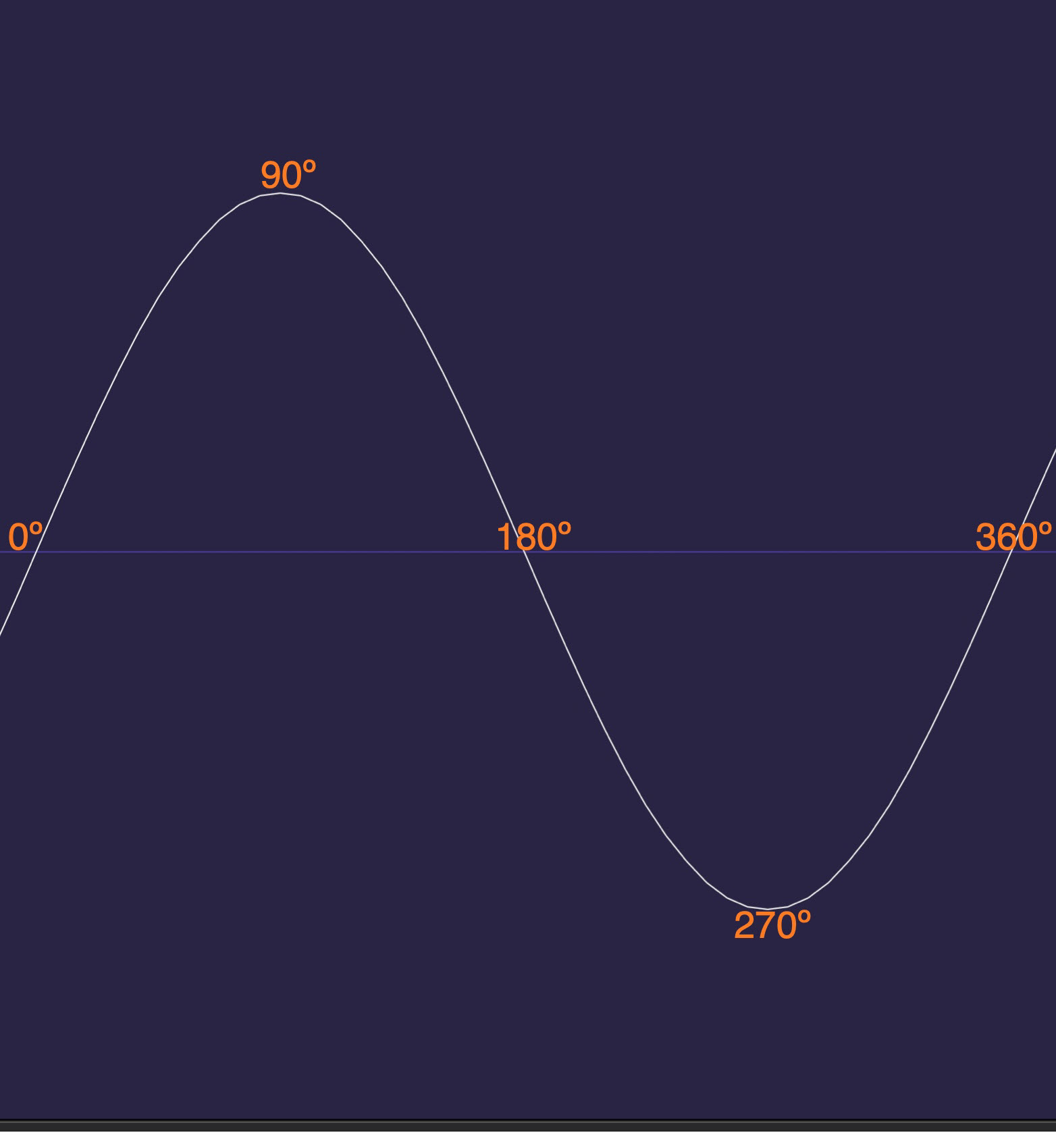

One complete cycle of a sound wave spans 360 degrees, with the midway points indicated in the diagram below. A wave’s phase is simply its position within that cycle, expressed in degrees.
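If it helps to see the degree scale as arithmetic, the sketch below converts elapsed time into a position within the cycle for a steady tone. The function name and the 1 kHz test tone are illustrative choices, not anything standard.

```python
def cycle_position_degrees(elapsed_seconds: float, frequency_hz: float) -> float:
    """Position within the current cycle, from 0 up to (but not including) 360."""
    cycles_elapsed = elapsed_seconds * frequency_hz
    return (cycles_elapsed % 1.0) * 360.0


# A 1 kHz tone completes one cycle every millisecond.
for elapsed_ms in (0.0, 0.25, 0.5, 0.75):
    degrees = cycle_position_degrees(elapsed_ms / 1000.0, 1000.0)
    print(f"{elapsed_ms:.2f} ms into a 1 kHz cycle -> about {degrees:.0f} degrees")
```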

When Wave Meets Wave

Phase doesn’t become critical until you try to play back two recordings captured simultaneously from the same source, but by different mics (or by a mic and a direct box).

Let’s say you have a recording of a guitar. If you were to duplicate it to another track in your DAW and hit play, the two tracks would start at precisely the same time and reinforce each other, so the combined level would increase. That’s called constructive interference.
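How much louder? As a quick sketch, two identical and perfectly aligned tracks add up to twice the amplitude, and the standard decibel formula turns that ratio into a level change. The function name here is just for illustration.

```python
import math


def level_change_db(amplitude_ratio: float) -> float:
    """Convert an amplitude ratio into a level change in decibels."""
    return 20.0 * math.log10(amplitude_ratio)


# Two identical, perfectly aligned tracks sum to twice the amplitude.
print(f"Doubling the amplitude raises the level by {level_change_db(2.0):.2f} dB")
```

That works out to roughly 6 dB, which is why a duplicated track sounds noticeably louder rather than different.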

But if you play them together and one is delayed, even by a tiny amount, a phase shift occurs between them. At the frequencies where the shifted waves oppose each other, the result is destructive interference. Instead of reinforcing one another, the two work against each other, resulting in a phenomenon called comb filtering, in which certain frequencies in the combined signal cancel one another. Where this cancellation is partial, the level of the affected frequencies is reduced; where it’s total, those frequencies drop out altogether.

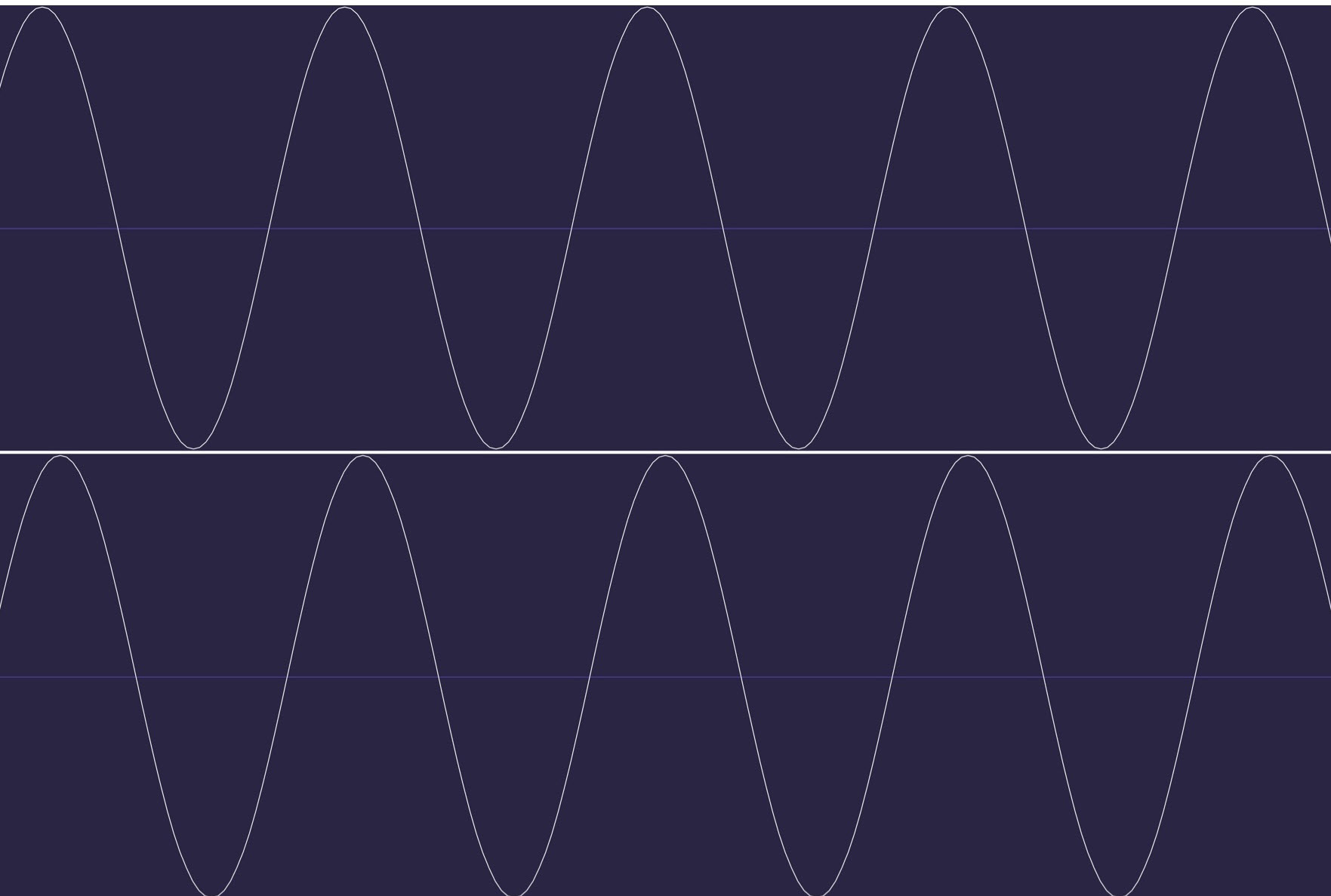

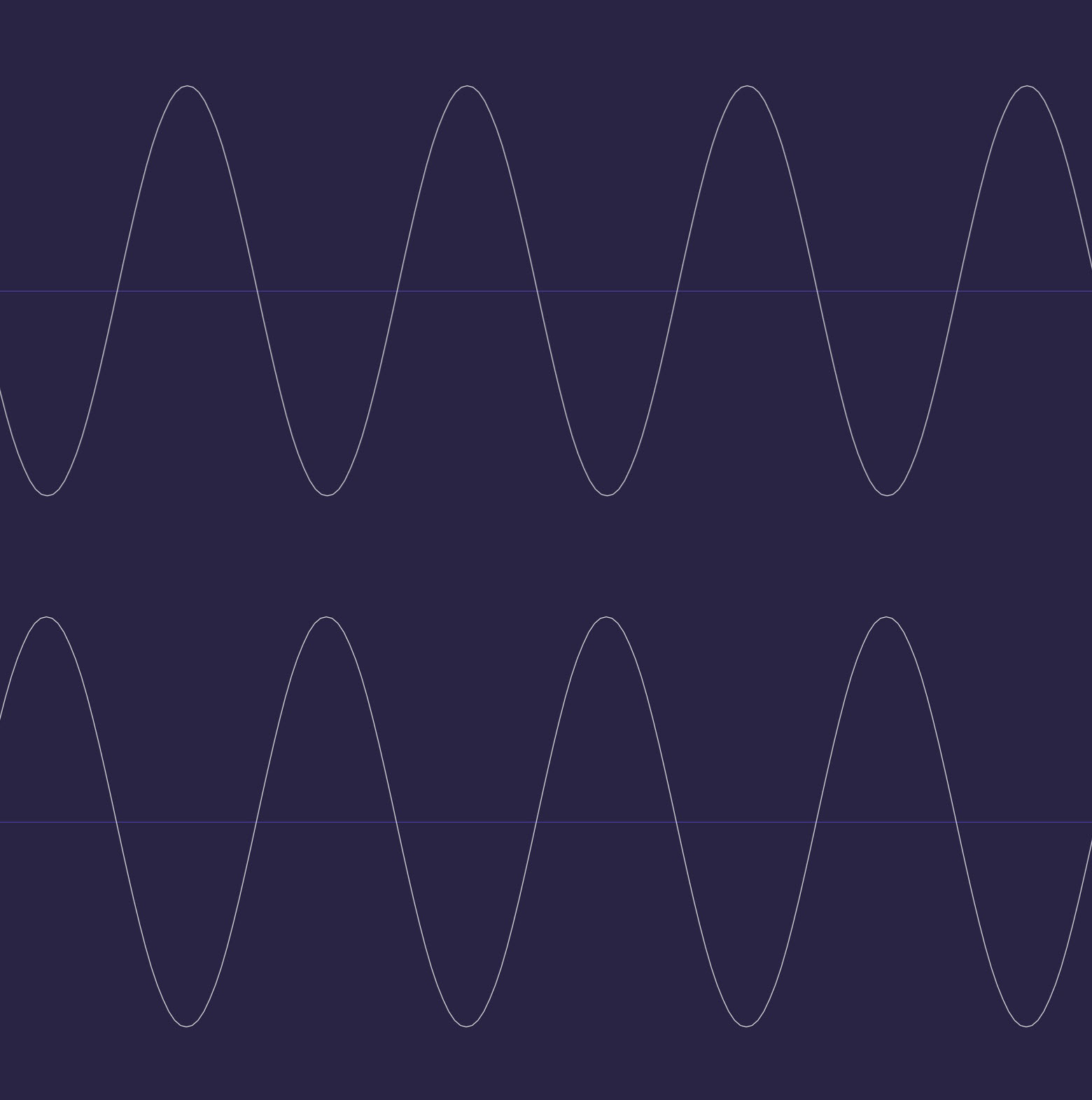

Here you see two sine waves, with the bottom one very slightly delayed. Even such a minute timing difference would be enough to cause comb filtering.
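To see where the "teeth" of the comb land, here is a minimal sketch. It assumes the idealized case of a signal mixed at equal level with a delayed copy of itself, where the gain at a frequency f works out to 2·|cos(π·f·delay)| and the deepest notches fall at odd multiples of 1/(2·delay). The function names and the 1 ms delay are illustrative.

```python
import math


def comb_gain(frequency_hz: float, delay_seconds: float) -> float:
    """Gain (0 to 2) at one frequency when a signal is summed with a delayed copy."""
    return abs(2.0 * math.cos(math.pi * frequency_hz * delay_seconds))


def notch_frequencies(delay_seconds: float, max_hz: float = 20000.0) -> list:
    """Frequencies up to max_hz that cancel completely for the given delay."""
    notches = []
    k = 0
    while True:
        frequency = (2 * k + 1) / (2.0 * delay_seconds)
        if frequency > max_hz:
            break
        notches.append(frequency)
        k += 1
    return notches


delay = 0.001  # a 1 ms offset between the two copies (hypothetical)
print("First notches (Hz):", [round(f) for f in notch_frequencies(delay)[:4]])
print("Gain at 1000 Hz:", round(comb_gain(1000.0, delay), 3))
```

Shorter delays push the first notch higher, and longer delays pack more notches into the audible range.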

The sonic result of comb filtering is a thinning out of the audio and less clarity, and sometimes a kind of weird timbre to the sound that could best be described as “washy.” Comb filtering is at its most obvious when listening in mono — that’s one of the reasons why it’s essential to check your mixes in mono. Phase issues that you don’t notice in stereo (or surround) will be much more prominent in mono.

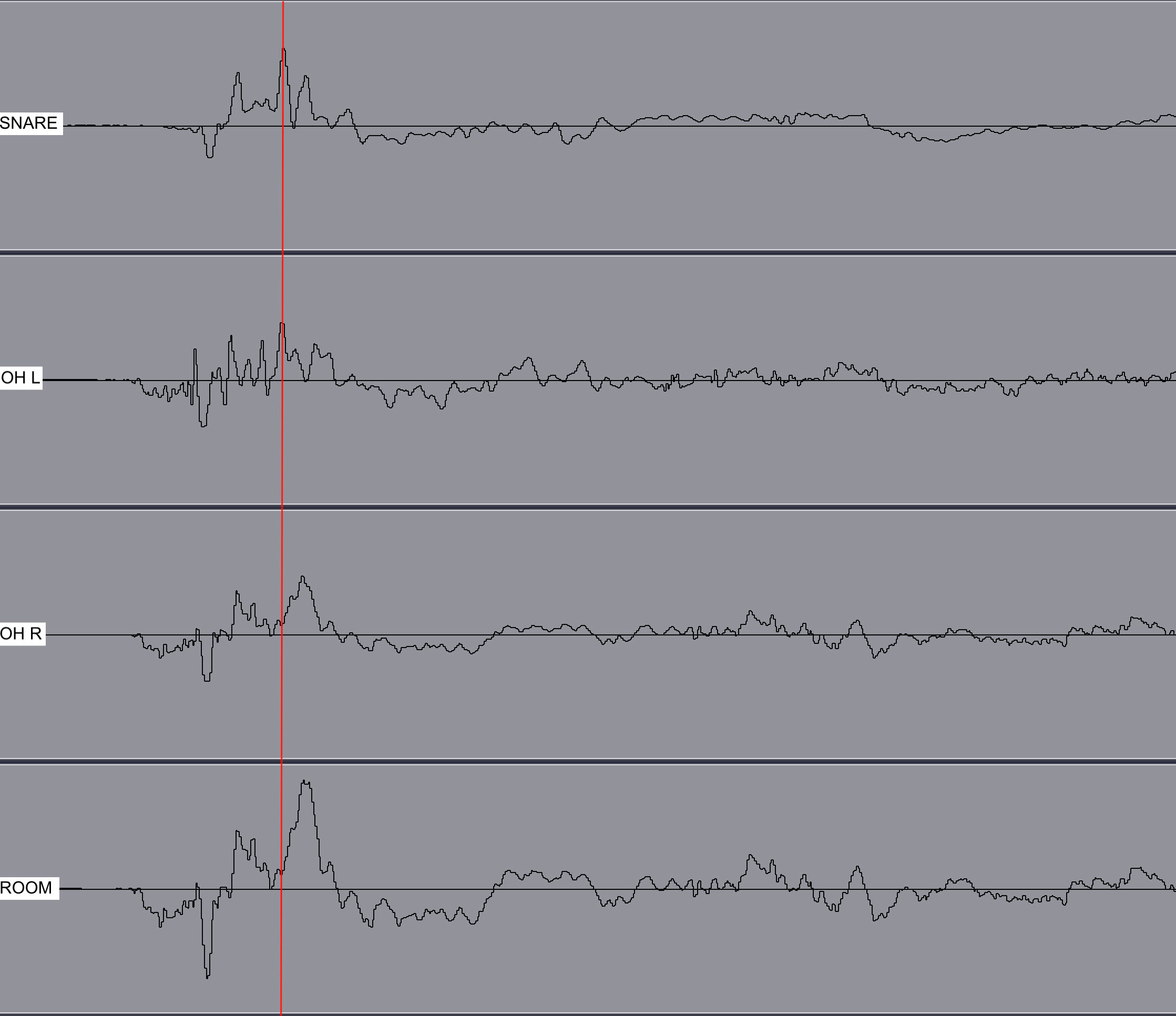

When you use more than one mic to record the same source, the different distances that the sound waves must travel to reach each mic are what cause their start times to vary. In the screenshot below, the peak of a snare hit is highlighted in the snare (top) track. Look at the other tracks, and you’ll see that the left overhead mic (OH) captured the snare at virtually the same time as the snare mic, but the right overhead and room mic were further away and thus slightly delayed.
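A rough way to estimate those delays is to divide the extra distance by the speed of sound. The sketch below assumes roughly 343 meters per second and a 48 kHz session; the mic distances are invented for illustration and are not taken from the session described here.

```python
SPEED_OF_SOUND_M_PER_S = 343.0  # assumption: dry air at roughly 20 °C


def arrival_delay_seconds(near_mic_m: float, far_mic_m: float,
                          speed: float = SPEED_OF_SOUND_M_PER_S) -> float:
    """Extra time the sound needs to reach the farther mic."""
    return (far_mic_m - near_mic_m) / speed


def delay_in_samples(delay_seconds: float, sample_rate: int = 48000) -> float:
    """The same delay expressed in samples at the given sample rate."""
    return delay_seconds * sample_rate


# Hypothetical distances from the snare to each mic, in meters.
snare_mic_m = 0.05
for name, distance_m in (("overhead", 1.0), ("room", 4.0)):
    delay = arrival_delay_seconds(snare_mic_m, distance_m)
    print(f"{name}: {delay * 1000:.2f} ms late, "
          f"about {delay_in_samples(delay):.0f} samples")
```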

In addition, some mics respond to changes in level faster than others, something that’s referred to as transient response. For example, a small-diaphragm condenser microphone usually has better transient response than a large-diaphragm condenser or dynamic mic. We’re talking about tiny differences, but they can be enough to create a phase shift between two tracks.

Cancel Culture

The ultimate in destructive interference occurs when two identical sound waves are 180º apart. That can cause them to cancel each other out entirely. Headphone manufacturers use that to their advantage in active noise cancellation. Microphones on the headphones pick up the background noise, and the electronics play a copy of that noise back through the drivers 180º out of phase, so the noise and its mirror image largely cancel before they reach your ears.

The screenshot below shows two waves that are 180º out of phase with one another. Notice how each positive peak of one lines up with a negative peak of the other.
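Here is a small sketch of that cancellation using generated sine waves. The 100 Hz tone, the 48 kHz sample rate and the helper names are all illustrative assumptions.

```python
import math

SAMPLE_RATE = 48000  # assumption: a typical session sample rate


def sine(frequency_hz: float, phase_degrees: float, num_samples: int) -> list:
    """Generate a sine wave that starts at the given phase offset."""
    offset = math.radians(phase_degrees)
    return [math.sin(2.0 * math.pi * frequency_hz * n / SAMPLE_RATE + offset)
            for n in range(num_samples)]


def peak(samples: list) -> float:
    """Largest absolute sample value."""
    return max(abs(s) for s in samples)


original = sine(100.0, 0.0, SAMPLE_RATE // 10)
in_phase = sine(100.0, 0.0, SAMPLE_RATE // 10)
opposed = sine(100.0, 180.0, SAMPLE_RATE // 10)

mix_in_phase = [a + b for a, b in zip(original, in_phase)]
mix_opposed = [a + b for a, b in zip(original, opposed)]

print(f"Peak when aligned:     {peak(mix_in_phase):.3f}")
print(f"Peak when 180º apart:  {peak(mix_opposed):.3f}")
```

The aligned pair doubles in level, while the opposed pair sums to (numerically almost exactly) silence.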

Phase vs. Polarity

The word “phase” is often used interchangeably with the word “polarity.” The two terms are related but not the same. Polarity is an electrical property related to positive and negative wiring. You can end up with inverted polarity from a mis-wired mic cable or other piece of gear. When the polarity is inverted, wherever the waveform was positive it flips to negative, and vice versa. On a simple sine wave that looks identical to a 180º phase shift, but nothing has actually moved in time. Phase, as we’ve seen, refers to time differences between sound waves.

This next illustration presents a small segment of a waveform (on top, shown in blue) with its polarity inverted (on bottom, shown in red). If you were to listen to these together in stereo, you’d hear a weak low-level signal, but if you listened to them in mono you’d hear … absolutely nothing.
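The difference between a polarity flip and a time shift shows up as soon as the sound contains more than one frequency. In this sketch, a made-up tone with a 100 Hz fundamental and a quieter 200 Hz overtone is summed first with a polarity-inverted copy and then with a copy delayed by half a cycle of the fundamental. The tone, sample rate and names are illustrative assumptions.

```python
import math

SAMPLE_RATE = 48000  # assumption: a typical session sample rate


def tone(n: int) -> float:
    """A simple two-partial sound: 100 Hz plus a quieter 200 Hz overtone."""
    t = n / SAMPLE_RATE
    return (math.sin(2.0 * math.pi * 100.0 * t)
            + 0.5 * math.sin(2.0 * math.pi * 200.0 * t))


def peak(samples: list) -> float:
    """Largest absolute sample value."""
    return max(abs(s) for s in samples)


num_samples = SAMPLE_RATE // 10
half_cycle = SAMPLE_RATE // 200  # half a cycle of 100 Hz = 5 ms = 240 samples

# Polarity inversion: every sample is flipped, so every frequency cancels.
sum_with_inverted = [tone(n) + (-tone(n)) for n in range(num_samples)]

# Time delay: 100 Hz ends up 180º apart, but 200 Hz ends up a full cycle apart.
sum_with_delayed = [tone(n) + tone(n - half_cycle) for n in range(num_samples)]

print(f"Peak with inverted copy: {peak(sum_with_inverted):.3f}")
print(f"Peak with delayed copy:  {peak(sum_with_delayed):.3f}")
```

The inverted copy nulls everything, while the delayed copy removes only the fundamental and actually reinforces the overtone. That is comb filtering, not silence.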

The Phase Button

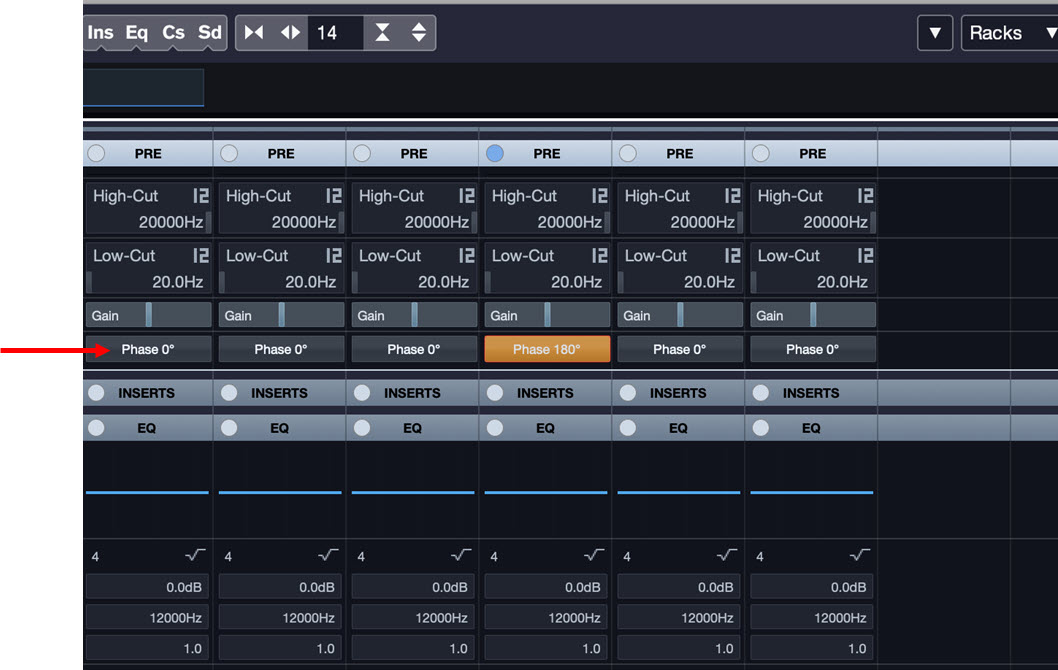

In a multi-mic recording — particularly of a drum kit where there are six or more open mics — there will inevitably be phase issues, based on the different distances between the mics and the various drums and cymbals.

To deal with this, engineers usually turn to channel “phase” buttons (which are actually “invert polarity” buttons) commonly offered by DAWs, mixers and audio interfaces, switching them in and out on various combinations of the mics. It’s not that any of the tracks are 180º out of phase, but sometimes they end up closer to being aligned when you completely invert them. It’s always helpful to check. All you have to do is listen to different combinations of the mic channels (usually the bass drum channel against the others, and then the snare drum against the others), hit the phase button and listen again. If it sounds thinner, simply turn it off; if it sounds bigger or better, leave it on.
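Your ears should make the final call, but the same comparison can be roughed out in numbers. This sketch uses summed RMS level as a crude stand-in for "bigger" versus "thinner," which is an assumption rather than an established method; the signals and function names are hypothetical.

```python
import math

SAMPLE_RATE = 48000  # assumption: a typical session sample rate


def rms(samples: list) -> float:
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def polarity_flip_helps(reference: list, other: list) -> bool:
    """True if the pair sums to a higher level with `other` inverted."""
    as_recorded = rms([a + b for a, b in zip(reference, other)])
    flipped = rms([a - b for a, b in zip(reference, other)])
    return flipped > as_recorded


# Hypothetical low drum tone at 80 Hz, plus the same tone arriving 5 ms later.
num_samples = SAMPLE_RATE // 10
late_by = 240  # 5 ms at 48 kHz
close_mic = [math.sin(2.0 * math.pi * 80.0 * n / SAMPLE_RATE)
             for n in range(num_samples)]
far_mic = [math.sin(2.0 * math.pi * 80.0 * (n - late_by) / SAMPLE_RATE)
           for n in range(num_samples)]

print("Engage the phase button on the far mic?",
      polarity_flip_helps(close_mic, far_mic))
```

In this made-up case the far mic is closer to 180º than to 0º at 80 Hz, so inverting it brings the pair nearer to alignment.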

By the way, if you want to use two mics to make a stereo recording, you can choose from several coincident and near-coincident stereo miking techniques, designed to capture a stereo image with minimal phase problems. Do an online search for the terms “X-Y,” “ORTF” or “mid-side miking” to learn more.

Don’t Get Phased

The bottom line is to be aware of phase whenever multiple mics or devices are used to capture the same sound source. You can often fix phase problems on a track that’s already been recorded by manually sliding it in time in your DAW to align it with another track, though you’ll need to zoom in quite far to be able to line them up accurately.
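The manual slide can also be estimated numerically. The sketch below tries a range of offsets and keeps the one where the two tracks match best (a brute-force cross-correlation). It is a simplified illustration using plain lists of samples, not how any particular DAW does it, and the function names and test signal are made up.

```python
def best_lag(reference: list, late: list, max_lag: int) -> int:
    """Offset (in samples) at which `late` best matches `reference`."""
    n = len(reference)
    best, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        # Multiply the tracks sample by sample at this offset and add it all up;
        # the total is largest when the waveforms line up.
        score = sum(reference[i] * late[i + lag]
                    for i in range(max_lag, n - max_lag))
        if score > best_score:
            best, best_score = lag, score
    return best


def slide_earlier(track: list, lag: int) -> list:
    """Shift a track earlier by `lag` samples (lag >= 0), padding with silence."""
    return track[lag:] + [0.0] * lag


# Hypothetical snare hit: a short decaying burst starting at sample 50.
snare = [0.0] * 200
for k in range(20):
    snare[50 + k] = 0.8 ** k

# The same hit arriving 7 samples later in a more distant mic.
room = [0.0] * 7 + snare[:-7]

lag = best_lag(snare, room, max_lag=20)
aligned_room = slide_earlier(room, lag)
print("Room mic is late by", lag, "samples")
```

Real tracks differ in tone as well as timing, so treat the result as a starting point and confirm by zooming in and listening.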

In addition, always be mindful of possible polarity reversals when connecting different pieces of gear. If something sounds thinner than it’s supposed to, try flipping the invert phase button on your audio interface or mixer (sometimes simply labeled “phase,” or indicated with a null symbol, “Ø”) to see if that improves it significantly. If so, you might have a cable or even a piece of gear that got accidentally wired backward.

Fun with Phasing … and Flanging Too

Being out of phase isn’t always a bad thing. Manufacturers of hardware effects processors and pedals, as well as developers of plug-ins, deliberately utilize phase differences and comb filtering to create effects called phase shifters and flangers. A phase shifter duplicates the incoming signal and shifts the phase of the copy, typically with a chain of all-pass filters, then adds modulation from a low frequency oscillator to give the blend a whooshy-sounding motion.

Flangers are similar but use a very short, continuously varying delay between the incoming and duplicated signals. The flanger originated in the late 1950s. Engineers discovered that if they played the same recording back on two tape recorders and slowed one down slightly by pressing on the flange of the tape reel, they got a cool effect. And you know what? It’s still cool to this very day.
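For the curious, a bare-bones digital flanger can be sketched in a few lines: mix the dry signal with a copy whose delay is swept up and down by a slow oscillator. This is a simplified illustration, not any manufacturer’s design; the parameter names and default values are assumptions, and real flangers usually add feedback.

```python
import math


def flanger(samples: list, sample_rate: int, max_delay_ms: float = 3.0,
            lfo_hz: float = 0.5, mix: float = 0.5) -> list:
    """Blend each sample with a copy delayed by a slowly sweeping amount."""
    max_delay = max_delay_ms / 1000.0 * sample_rate  # longest delay, in samples
    output = []
    for n, dry in enumerate(samples):
        # Low frequency oscillator swinging between 0 and 1.
        lfo = 0.5 * (1.0 + math.sin(2.0 * math.pi * lfo_hz * n / sample_rate))
        position = n - lfo * max_delay  # where the delayed copy is read from
        if position < 0:
            wet = 0.0  # nothing has been recorded that far back yet
        else:
            index = int(position)
            fraction = position - index
            following = samples[index + 1] if index + 1 < len(samples) else samples[index]
            # Linear interpolation between neighbouring samples.
            wet = samples[index] * (1.0 - fraction) + following * fraction
        output.append((1.0 - mix) * dry + mix * wet)
    return output


# Hypothetical input: one second of a 220 Hz tone at 8 kHz to keep it quick.
rate = 8000
dry_signal = [math.sin(2.0 * math.pi * 220.0 * n / rate) for n in range(rate)]
flanged = flanger(dry_signal, rate)
print("Processed", len(flanged), "samples")
```

Because the delay keeps changing, the comb filter notches sweep up and down the spectrum, which is the characteristic whoosh.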
